2 Game Theory and Mixed-Motive Settings

As mentioned in the introductory section, cooperative AI uses many tools and concepts from game theory, a mathematical framework for modelling strategic interactions. In this section, we’ll introduce you to some of the most important tools and concepts, and use these to get a sense for the kind of problems, settings and systems that the field of cooperative AI tends to focus on, and why.

Cooperation and Competition

In game theory, multi-agent settings can be classified into three categories:

- Fully cooperative: all agents have shared objectives, where what is “good” for one agent is always equally good for another agent.

- Fully competitive: all agents have opposing objectives, where what is “good” for one agent is always bad for all the others (also referred to as zero-sum or constant-sum games).

- Mixed-motive: agents have some overlap in objectives but also some conflicting interests.
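
For two-player games written as payoff matrices, the three categories above can be checked mechanically. The sketch below is illustrative only: it assumes a strict reading in which "fully cooperative" means identical payoffs in every cell and "fully competitive" means payoffs that sum to the same constant in every cell. The function name `classify_setting` and the example payoffs are hypothetical.

```python
def classify_setting(A, B):
    """Classify a two-player game from its payoff matrices.

    A[i][j] and B[i][j] are the payoffs to Player 1 and Player 2 when
    Player 1 plays row i and Player 2 plays column j.
    """
    cells = [(a, b) for row_a, row_b in zip(A, B) for a, b in zip(row_a, row_b)]
    # Assumption: fully cooperative means both players get the same payoff in every cell.
    if all(a == b for a, b in cells):
        return "fully cooperative"
    # Assumption: fully competitive means payoffs sum to one constant in every cell.
    if len({a + b for a, b in cells}) == 1:
        return "fully competitive"
    return "mixed-motive"


# Hypothetical example games.
team_a = team_b = [[2, 0], [0, 1]]
pennies_a, pennies_b = [[1, -1], [-1, 1]], [[-1, 1], [1, -1]]
dilemma_a, dilemma_b = [[3, 0], [5, 1]], [[3, 5], [0, 1]]

print(classify_setting(team_a, team_b))        # fully cooperative
print(classify_setting(pennies_a, pennies_b))  # fully competitive
print(classify_setting(dilemma_a, dilemma_b))  # mixed-motive
```

Real interactions rarely satisfy either exact test, which is one way to see why almost everything ends up in the mixed-motive category.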

Virtually all interactions you have with other human beings could be described as mixed-motive. In a family, a sports team, a classroom or a workplace, different people have different goals that are neither perfectly aligned with each other nor perfectly opposed.

Fully cooperative and fully competitive settings are popular research topics in AI (for example, some of the most talked about breakthroughs in recent AI history are to do with performance in zero-sum games like Go). There are many reasons for this, such as:

- There is a clear, unambiguous sense of what counts as good performance, and they are simpler to analyse mathematically and build scalable algorithms for.

- Board games and video games are natural test environments for AI systems and are often zero-sum.

- Applications with a single AI deployer are often fully cooperative (e.g. teams of robots in a warehouse).

However, it’s important to note that "fully cooperative" and "fully competitive" settings only really exist within the realm of game theory or idealised settings like board games and sandboxed industrial settings. For real-world interactions between human entities, it is better to think in terms of a "mostly competitive" to "mostly cooperative" scale.

This brings us to the notion of preference alignment: the degree to which the goals of agents in a particular setting are similar. Other ways of saying this might be: the degree to which agents are on the same team, the degree to which agents want the same outcomes as each other, or the degree of similarity between all the agents' goals. Lewis Hammond touches on this concept in the first lecture of the previous section (during this part of the presentation).

Place the following scenarios on a scale of most cooperative to most competitive and briefly explain your reasoning:

- Players in a game of chess.

- Two groups of academic researchers each working on projects to find a cure to a particular disease.

- A group of people all fishing from the same lake, each to feed themselves and their family.

- A fleet of autonomous robots, all deployed by the same company, moving stock around a warehouse.

Come up with 3-4 more multi-agent scenarios, real or hypothetical, and rank them as you have done above.

Consider a game of football. Each team appears to be in a fully cooperative setting: players want their team to win and, if a perfect strategy were known, players would surely play their assigned roles regardless of what those roles were. Is each team in a football game a fully cooperative setting? If not, what external factors are we not considering that should shift this from a fully cooperative to a mixed-motive setting?

Fully competitive settings are not of interest to the cooperative AI field. This is because there is no room for overall cooperation or mutual benefit (though subgroups of agents can of course cooperate at the expense of others). Conflict is inevitable. Fortunately, fully competitive settings are also quite rare. The rest of this section will therefore focus on the mixed-motive and fully cooperative end of the spectrum.

The possible challenges and failures that agents in mixed-motive and fully cooperative settings face are different but there is some overlap. The following piece, “A Review of Cooperation in Multi-agent Learning”, introduces cooperation between learning agents and contrasts fully cooperative settings against mixed-motive settings with some real-world examples. The section we recommend reading presents the challenges that are faced by learning agents in cooperative and mixed-motive settings.

Section 5 on cooperation with mixed motivation goes into more technical detail and is optional reading. It defines social dilemmas more rigorously (you will be introduced to these later) and presents ways to solve them.

5 Cooperation with mixed motivation

Consider the solution approaches presented in section 5 of ‘A Review of Cooperation in Multi-agent Learning’. Write a one-sentence summary of the idea behind each of these suggested solutions.

Consider the following hypothetical scenario: advanced AI agents working on behalf of different companies manage their own resource-intensive data centres. They use water from local rivers, lakes and reservoirs, and the AI agents influence their demand through how intensely they run their systems, how much they expand their data centres, etc. They may even be able to lean on political and financial leverage to influence government and water companies. What are the failure modes here, and how could a system of reputation and norms be established to improve cooperation among these agents? Evaluate the feasibility of introducing such a system.

The following piece, section 2.2 of ‘Multi-Agent Risks from Advanced AI’, provides a more in-depth explanation of why mixed-motive interactions are such an important aspect of multi-agent AI safety.

Decomposing Games into a Cooperative and Competitive Component (Optional)

This section is a small optional walkthrough of how to decompose any two-player normal-form game into a fully cooperative component and a fully competitive component. It is based on the work of Adam Kalai and Ehud Kalai (here is an accessible link). This part is optional and quite technical. It is best for those with a solid background in game theory and solution concepts (you will need to understand the minimax concept to continue).

Any two-player game G with payoff matrices (A, B) (one for each player) can be decomposed into two components:

- The zero-sum game $G_z$, where Player 1 receives $D = (A - B)/2$ and Player 2 receives $-D$. This captures pure competition: whatever one player gains, the other loses.

- The team game $G_t$, with payoffs $C = (A + B)/2$ for both players. In this game, both players have identical payoffs, so their interests are perfectly aligned.

You can verify that adding these two components recovers the original game: Player 1’s payoff is $C + D = (A + B)/2 + (A - B)/2 = A$, and Player 2’s payoff is $C - D = (A + B)/2 - (A - B)/2 = B$.
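
The decomposition and the check that it recovers the original game can be sketched in a few lines of Python. The payoff numbers below are hypothetical and chosen only for illustration, and the helper names `decompose` and `recombine` are not from the source.

```python
def decompose(A, B):
    """Split a two-player game (A, B) into a team part C and a zero-sum part D."""
    C = [[(a + b) / 2 for a, b in zip(row_a, row_b)] for row_a, row_b in zip(A, B)]
    D = [[(a - b) / 2 for a, b in zip(row_a, row_b)] for row_a, row_b in zip(A, B)]
    return C, D


def recombine(C, D):
    """Rebuild the original payoffs: Player 1 gets C + D, Player 2 gets C - D."""
    A = [[c + d for c, d in zip(row_c, row_d)] for row_c, row_d in zip(C, D)]
    B = [[c - d for c, d in zip(row_c, row_d)] for row_c, row_d in zip(C, D)]
    return A, B


# Hypothetical 2x2 game: A holds Player 1's payoffs, B holds Player 2's.
A = [[3, 0], [5, 1]]
B = [[3, 5], [0, 1]]

C, D = decompose(A, B)
print(C)  # team game payoffs, shared by both players
print(D)  # zero-sum payoffs for Player 1 (Player 2 receives the negation)
print(recombine(C, D) == (A, B))  # True
```

Dividing by two keeps the two components on the same scale as the original payoffs, which is why simply adding and subtracting them gives back A and B.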

The decomposition can help us to understand the structure of the game. One useful concept that builds on it is the coco value. Assuming utility in the game is transferable (for example, it represents a cash payment) and the two players can cooperate on playing the strategies that maximise total welfare, the coco value suggests a fair way to distribute this welfare between the two players (fair with respect to the players’ competitive advantage over each other). It is defined for each player i as follows:

$$\mathrm{coco}_i = \operatorname{maxmax}(G_t) + \operatorname{minmax}(G_z)_i$$

where $\operatorname{maxmax}(G_t)$ is the largest payoff in the team game (the largest value of $(A + B)/2$ across all strategy profiles, i.e. half of the maximum total welfare), and $\operatorname{minmax}(G_z)_i$ is the $i$th component of the minimax value of the zero-sum game $G_z$. Note that unless $\operatorname{minmax}(G_z) = \operatorname{maxmin}(G_z)$ over pure strategies, finding $\operatorname{minmax}(G_z)$ requires considering mixed strategies.
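
As a rough illustration of the definition, the sketch below computes the coco value for games in which Player 1 has exactly two strategies. That restriction is an assumption made to keep the example short: with two rows, the mixed-strategy value of the zero-sum component can be found by checking the endpoints and the crossing points of the column player's payoff lines, whereas larger games would normally need a linear-programming solver. The function names and the example payoffs are hypothetical.

```python
from fractions import Fraction
from itertools import combinations


def zero_sum_value_2xn(D):
    """Value to the row player of a zero-sum game with exactly two rows.

    The row player plays the top row with probability p. Each column j gives
    the line intercept + p * slope; the row player maximises the lower envelope.
    """
    top, bottom = D
    lines = [(Fraction(b), Fraction(t) - Fraction(b)) for t, b in zip(top, bottom)]
    candidates = {Fraction(0), Fraction(1)}
    for (c1, s1), (c2, s2) in combinations(lines, 2):
        if s1 != s2:
            p = (c2 - c1) / (s1 - s2)  # where the two lines cross
            if 0 <= p <= 1:
                candidates.add(p)
    return max(min(c + p * s for c, s in lines) for p in candidates)


def coco_value(A, B):
    """Coco value (Player 1, Player 2) of a two-row game with transferable utility."""
    C = [[Fraction(a + b) / 2 for a, b in zip(ra, rb)] for ra, rb in zip(A, B)]
    D = [[Fraction(a - b) / 2 for a, b in zip(ra, rb)] for ra, rb in zip(A, B)]
    team_value = max(max(row) for row in C)  # maxmax of the team game
    z = zero_sum_value_2xn(D)                # Player 1's value in the zero-sum game
    return team_value + z, team_value - z


# Hypothetical game, not the one from the exercises below.
A = [[4, 3], [6, 1]]
B = [[2, 1], [0, 3]]
coco_1, coco_2 = coco_value(A, B)
print(coco_1, coco_2)  # prints "4 2"
```

In this example the two shares add up to 6, the maximum total welfare, and Player 1 receives more than half because they can guarantee themselves a positive payoff in the zero-sum component.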

Two firms are negotiating a joint venture. Firm 1 (the row player) can choose strategy T or B. Firm 2 (the column player) can choose strategy L, M, or R. The game is given below, with each cell showing (Firm 1 payoff, Firm 2 payoff):

a) Compute the team game $G_t$ by calculating $C = (A + B)/2$ for each cell.

b) Compute the zero-sum game $G_z$ by calculating $D = (A - B)/2$ for each cell. This is Player 1’s payoff matrix; Player 2’s is $-D$.

c) Compute the coco value $(c^*/2 + z,\ c^*/2 - z)$, where $c^*$ is the maximum total welfare (the largest value of $A + B$ across all cells) and $z$ is Player 1’s minimax value in $G_z$. Which player receives more than half the cooperative surplus, and by how much?

Suppose the two firms can propose and sign a binding contract that specifies which strategy profile to play and how the utility should be divided.

d) Suppose Player 2 proposes splitting the maximum total welfare equally: each player receives $c^*/2$. Examine the zero-sum game $G_z$. Can Player 1 guarantee themselves a positive payoff in $G_z$ through their choice of strategy? What does this imply about whether Player 1 should accept an equal split of the total welfare?

e) Now suppose Player 1 proposes receiving $c^*/2 + z$ (their coco value). Could Player 2 credibly threaten to do better? Consider what happens in the zero-sum game when Player 2 tries to minimise Player 1’s competitive advantage.

Firm 2 from the previous exercise now develops a new option, strategy S, leading to the following expanded game:

a) Compute the team game $G_t$ and zero-sum game $G_z$.

b) Compute the coco value.

c) Notice that strategy S does not change the maximum total welfare compared to the game in the previous exercise. Nevertheless, S does change the coco value. Explain in your own words why a strategy that does not improve the cooperative outcome can still shift the division of surplus between the players.

Basic Game Theory for Cooperative AI

This subsection provides a basic introduction to concepts from game theory that are relevant to cooperative AI. If you are familiar with game theory, feel free to skip the three suggested readings below, but not the more detailed discussions of coordination problems, bargaining problems and social dilemmas as these are important to understanding the priorities of the cooperative AI field.

Game theory abstracts situations involving multiple agents by modelling them as games with players, strategies and payoffs. Each player could be an individual, group, or AI agent. Payoffs, also known as utilities or rewards, are a way of representing the preferences players have over all the different combinations of strategies that players could adopt. The following provide a quick and accessible overview of the core terms and concepts from game theory.

A particularly important concept for cooperative AI from game theory is Pareto efficiency, as it serves as a foundation for many formal definitions of cooperation.

All parts

The prisoner’s dilemma is the most famous example in game theory and is useful for understanding the basic concepts you’ve seen so far.
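
To make the link between Nash equilibria and Pareto efficiency concrete, here is a minimal sketch that enumerates both for a prisoner's dilemma. The payoff numbers are a common textbook choice and are an assumption rather than something taken from the readings; the helper names are hypothetical.

```python
from itertools import product

ACTIONS = ("cooperate", "defect")

# Assumed payoffs (higher is better): PAYOFFS[(a1, a2)] = (Player 1, Player 2).
PAYOFFS = {
    ("cooperate", "cooperate"): (3, 3),
    ("cooperate", "defect"): (0, 5),
    ("defect", "cooperate"): (5, 0),
    ("defect", "defect"): (1, 1),
}


def is_nash(profile):
    """Neither player can gain by changing only their own action."""
    a1, a2 = profile
    u1, u2 = PAYOFFS[profile]
    best_1 = all(PAYOFFS[(d, a2)][0] <= u1 for d in ACTIONS)
    best_2 = all(PAYOFFS[(a1, d)][1] <= u2 for d in ACTIONS)
    return best_1 and best_2


def is_pareto_optimal(profile):
    """No other outcome is at least as good for both players and better for one."""
    u = PAYOFFS[profile]
    for other in PAYOFFS.values():
        weakly_better = all(o >= x for o, x in zip(other, u))
        strictly_better = any(o > x for o, x in zip(other, u))
        if weakly_better and strictly_better:
            return False
    return True


profiles = list(product(ACTIONS, repeat=2))
nash = [p for p in profiles if is_nash(p)]
pareto = [p for p in profiles if is_pareto_optimal(p)]
print(nash)    # [('defect', 'defect')]
print(pareto)  # every profile except ('defect', 'defect')
```

With these payoffs the only Nash equilibrium is mutual defection, which is also the only outcome that is not Pareto-optimal.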

The following video also provides a basic introduction to game theory, but in the context of cooperative AI. Feel free to skip or play most of it at a higher speed as it covers a lot of what has been introduced above, but it can be useful to see the concepts contextualised within the cooperative AI field.

Two people are playing a game to split £1 (for those less familiar with British currency, £1 = 100 pence, written 100p). The game goes as follows: both players simultaneously announce how much of the £1 they would like. If the sum of the announced shares is at most £1, they both receive their share, but if it is strictly greater than £1, they both receive nothing. Which of the following are Nash equilibria of this game?

- Both players ask for 50p each.

- One player asks for 20p and the other 80p.

- One player asks for 40p and the other 50p.

- Both players ask for £1.

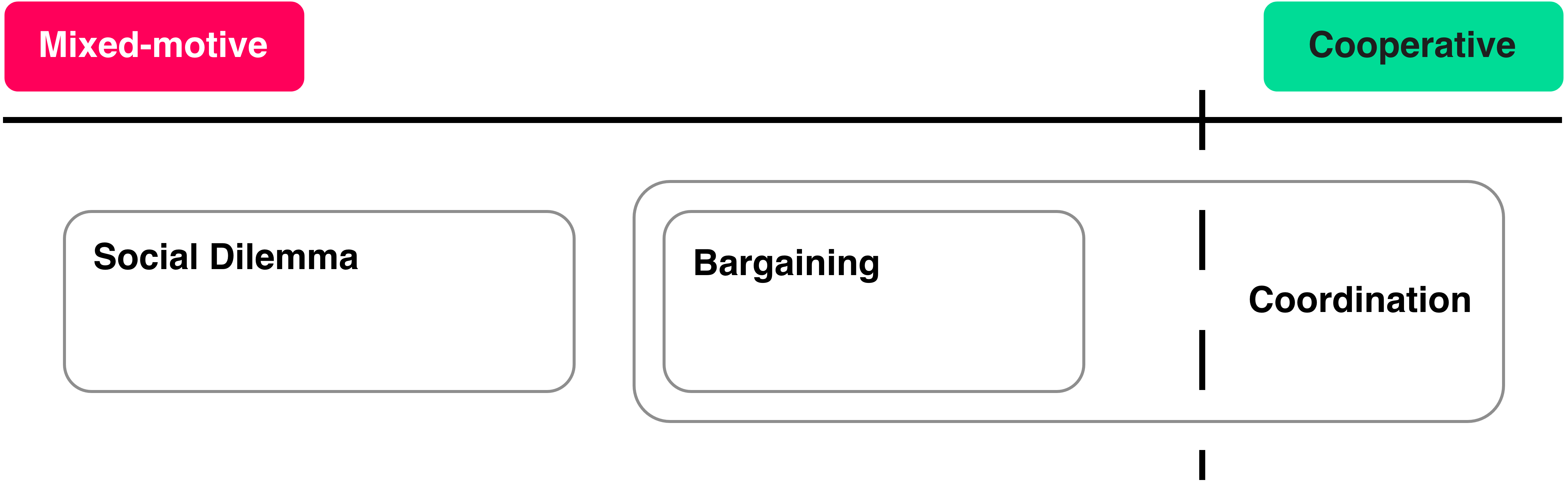

Now we’ll introduce three kinds of games: a coordination problem, bargaining problem and social dilemma. You may have already come across these terms in your reading so far. They are important types of multi-agent situations to comprehend for understanding the priorities of the cooperative AI field. We start with a technical definition of each kind of game, and then provide examples to help grasp the core ideas.

(You may come across many subtly different definitions for the terms below, but these are what we’ll be working with in the curriculum.)

Coordination Problem

- A coordination problem is a game involving at least two Pareto-optimal Nash equilibria, and to achieve one of them, the agents must coordinate (i.e. pick compatible strategies). Put another way, if the agents play strategies for different Pareto-optimal outcomes, they will reach a Pareto-dominated outcome.

- For a two-player game, this can be formalised as follows: a game is a coordination problem if there exists at least one pair of Pareto-optimal Nash equilibria (a, b) and (c, d), such that (a, d) or (c, b) are Pareto-dominated.
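
The two-player formalisation can be turned into a direct check over pure strategies. The sketch below is one possible reading of the definition, restricted to pure-strategy Nash equilibria, and the example encodes a hypothetical version of two people passing on a pavement (strategy 0 = keep to your own left, strategy 1 = keep to your own right, payoff 1 if they pass and 0 if they collide). All function names are illustrative.

```python
from itertools import combinations, product


def payoffs(A, B, profile):
    i, j = profile
    return (A[i][j], B[i][j])


def is_pareto_optimal(A, B, profile):
    """True if no other cell is at least as good for both players and differs."""
    u = payoffs(A, B, profile)
    for other in product(range(len(A)), range(len(A[0]))):
        v = payoffs(A, B, other)
        if v != u and all(x >= y for x, y in zip(v, u)):
            return False
    return True


def is_nash(A, B, profile):
    """True if neither player gains from a unilateral pure-strategy deviation."""
    i, j = profile
    row_ok = all(A[k][j] <= A[i][j] for k in range(len(A)))
    col_ok = all(B[i][k] <= B[i][j] for k in range(len(A[0])))
    return row_ok and col_ok


def is_coordination_problem(A, B):
    """Look for Pareto-optimal equilibria (a, b), (c, d) whose mix is dominated."""
    cells = product(range(len(A)), range(len(A[0])))
    good = [p for p in cells if is_nash(A, B, p) and is_pareto_optimal(A, B, p)]
    for (a, b), (c, d) in combinations(good, 2):
        if not is_pareto_optimal(A, B, (a, d)) or not is_pareto_optimal(A, B, (c, b)):
            return True
    return False


PAVEMENT = [[1, 0], [0, 1]]  # same matrix for both walkers (hypothetical payoffs)
print(is_coordination_problem(PAVEMENT, PAVEMENT))  # True
```

In a finite game, a profile is Pareto-dominated exactly when it is not Pareto-optimal, which is why the check can reuse `is_pareto_optimal` for the mismatched profiles.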

Don’t worry if the above doesn’t make a lot of sense yet. There are two types of coordination problems that are most important for you to understand: a pure coordination problem and a bargaining problem.

Pure Coordination Problem

- A pure coordination problem is a coordination problem where agents have identical preferences over the Pareto-optimal outcomes. That is, they don’t care which Pareto-optimal Nash equilibrium is coordinated on, only that any one is coordinated on.

The prototypical example of a pure coordination problem is two people walking towards each other down a narrow pavement. Here, the “Pareto-optimal Nash equilibria” are: both people walk on their own left, or both people walk on their own right. If they miscoordinate and end up on the same side of the pavement, they will collide. A higher-stakes class of pure coordination problems is establishing technology standards. For example, there are typically many different ways a connector or port could be designed that everyone would be roughly equally happy with, but it’s best if we all agree to use only one of them.

In these cases, the only problem is agreeing on any compatible, Pareto-efficient set of actions. To do this, a communication protocol or shared convention might be set up (or evolve organically over time). For example, people in the UK typically gravitate to walking on the left side of the pavement, in line with the side of the road that cars drive on. In pure coordination problems, improving the capabilities of individual agents (better communication, better perception, more knowledge and access to information) is often sufficient to solve the problem. Once agents can reliably observe each other or share a convention, the problem largely dissolves.

Pure coordination problems are quite rare in the real world. Even problems that might seem like pure coordination problems often involve a lot of mixed motives. For example, after taking into account some practical considerations, the choice of where to place the prime meridian on Earth is seemingly arbitrary, and having a universally agreed prime meridian is of course better than having none at all. But the choice does have geopolitical implications. In fact, at the International Meridian Conference held in October 1884 to determine a prime meridian for international use, France argued for a “neutral” line somewhere such as the Azores or the Bering Strait, abstained from the vote and continued to use the Paris meridian until 1911 (see here for more details).

Bargaining Problem

- A bargaining problem is a mixed-motive coordination problem where the agents have different preferences over the Pareto-optimal outcomes requiring coordination.

- For a two-player game, this can be formalised as follows: a game is a bargaining problem if there exist distinct Pareto-optimal Nash equilibria (a, b) and (a’, b’) such that (a, b’) or (a’, b) is Pareto-dominated and one player prefers (a, b) to (a’, b’).

- Chicken and Bach or Stravinsky (also known as Battle of the Sexes) are the classic bargaining problems from game theory.
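
The difference between a pure coordination problem and a bargaining problem comes down to whether the players rank the Pareto-optimal equilibria differently. The following sketch applies the two-player definitions to pure strategies only. The payoffs for Bach or Stravinsky and for the pavement game are standard illustrative numbers, assumed here rather than taken from the text, and the function names are hypothetical.

```python
from itertools import combinations, product


def payoff(A, B, p):
    return (A[p[0]][p[1]], B[p[0]][p[1]])


def pareto_optimal(A, B, p):
    u = payoff(A, B, p)
    cells = product(range(len(A)), range(len(A[0])))
    return not any(
        payoff(A, B, q) != u and all(x >= y for x, y in zip(payoff(A, B, q), u))
        for q in cells
    )


def nash(A, B, p):
    i, j = p
    return (all(A[k][j] <= A[i][j] for k in range(len(A)))
            and all(B[i][k] <= B[i][j] for k in range(len(A[0]))))


def classify_coordination(A, B):
    """Return 'bargaining problem', 'pure coordination problem' or 'neither'."""
    cells = list(product(range(len(A)), range(len(A[0]))))
    good = [p for p in cells if nash(A, B, p) and pareto_optimal(A, B, p)]
    found_pure = False
    for (a, b), (a2, b2) in combinations(good, 2):
        # Miscoordinating must lead to a Pareto-dominated outcome.
        if all(pareto_optimal(A, B, q) for q in ((a, b2), (a2, b))):
            continue
        # Different payoffs at the two equilibria mean some player has a preference.
        if payoff(A, B, (a, b)) != payoff(A, B, (a2, b2)):
            return "bargaining problem"
        found_pure = True
    return "pure coordination problem" if found_pure else "neither"


bach_or_stravinsky = ([[2, 0], [0, 1]], [[1, 0], [0, 2]])
pavement = ([[1, 0], [0, 1]], [[1, 0], [0, 1]])
print(classify_coordination(*bach_or_stravinsky))  # bargaining problem
print(classify_coordination(*pavement))            # pure coordination problem
```

Both games have the same equilibrium structure; only the payoffs at the equilibria differ, and that alone moves the game from pure coordination to bargaining.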

Take an intersection on the road as an example, and suppose two cars are approaching. Both want to avoid a crash, but each would prefer to be the one that goes first. In these reasonably low-stakes, repeated scenarios, the solution is to fairly distribute who is favoured over the long term. For example, traffic lights typically swap evenly between which lanes are allowed to move and which must wait at busy intersections.

For a higher-stakes example, consider two nations negotiating over which country will host a major international institution, such as the headquarters of a new regulatory body. Both nations benefit from the institution existing, but the hosting country gains disproportionate influence and economic benefits, and the headquarters can only be in one place. Both this and coordinating cars on the road are bargaining problems, but this new situation is much harder to solve in a way that keeps all agents happy.

Bargaining problems are more socially demanding than pure coordination problems. Raw capability improvements might not necessarily be enough. Typically what's needed is something like a fair coordination device: a traffic light, a convention, a compromise or long-term agreement. The complexity of this device, or the difficulty of setting it up and maintaining it, depends on a few factors. We’ve already seen in the exercises above how factors like time horizon and the magnitude of the difference in preferences between the Pareto-optimal outcomes can have a large effect on the difficulty of bargaining problems and the efficacy of a coordination device. The problems caused by mixed motivations are most acute in social dilemmas, the final class of games we will introduce.

Social Dilemma

- A social dilemma is where Pareto-optimal outcomes exist (known as mutual cooperation or cooperative outcomes), but players are individually incentivised to avoid them, even if they knew that the other agents would behave in accordance with them.

- A game-theoretic way of saying this is that the Nash equilibria of the game are not Pareto-optimal.

- The Prisoner’s Dilemma and the Public Goods Game are the prototypical examples of social dilemmas.

Social dilemmas crop up frequently in the real world, from maintaining public spaces to arms races. In fact, there is a brilliant list of examples on the Wikipedia page for the ‘Tragedy of the Commons’.

Read the examples of social dilemmas given on the ‘Tragedy of the Commons’ Wikipedia page. Pick one, and explain in detail how it constitutes a social dilemma. Make sure to describe the outcome that would be best for the collective and what is the individual incentive that makes this hard to achieve. What are things that humans have done or could do to try and solve the social dilemma you’ve chosen?

Let’s walk through a particular example of a social dilemma and explain how it differs from the previous two types of game. Take a group of fishing communities sharing a lake, where each community benefits from fishing more, but if everyone fishes as much as they'd individually like to, the fish stock collapses and everyone is worse off. In this situation the problem is that each agent is individually incentivised to not restrain their catch, even if they know others are cooperating (in fact, especially if they know others are cooperating!). The cooperative outcome—where everyone fishes a modest amount—is not a Nash equilibrium, meaning there is always a temptation to defect by fishing more. This contrasts with a coordination problem where an agent would be individually incentivised to act compatibly if they knew which coordinated outcome all other agents planned to pursue.

This distinction has an important consequence. Unlike coordination problems, providing agents with better information, better communication, or simply more intelligence isn’t enough to solve a social dilemma. The fishing communities already understand the situation perfectly. Giving them better forecasts of fish populations, or faster communication, does not remove the underlying incentive to overfish. In fact, improving the capabilities of individual agents may even make things worse if those capabilities are used to find more sophisticated ways to exploit others. What's needed instead is something that changes the incentive structure: monitoring and enforcement of catch limits, systems of reputation that punish over-fishers, binding agreements, or norms backed by sanctions. These are fundamentally more demanding to design, implement, and maintain, and hence more costly and fragile.
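
A toy model can illustrate why information alone does not help while a change in incentives does. The sketch below is a deliberately simple, hypothetical payoff model of the fishing example: the number of communities, the private gain from overfishing, the shared damage and the size of the fine are all made-up numbers, and the function names are illustrative.

```python
N = 4          # number of fishing communities (hypothetical)
GAIN = 3.0     # private benefit a community gets from overfishing (hypothetical)
DAMAGE = 5.0   # total harm each overfisher causes, shared equally by all (hypothetical)


def payoff(i, choices, fine=0.0):
    """Payoff to community i; choices[k] is True if community k overfishes."""
    shared_harm = DAMAGE * sum(choices) / N
    private = (GAIN - fine) if choices[i] else 0.0
    return private - shared_harm


def temptation(others_overfishing, fine=0.0):
    """Extra payoff community 0 gets by overfishing rather than restraining."""
    others = [True] * others_overfishing + [False] * (N - 1 - others_overfishing)
    return payoff(0, [True] + others, fine) - payoff(0, [False] + others, fine)


# Without enforcement, overfishing pays whatever the others do...
print([temptation(k) for k in range(N)])            # [1.75, 1.75, 1.75, 1.75]
# ...even though everyone restraining beats everyone overfishing.
print(payoff(0, [False] * N), payoff(0, [True] * N))  # 0.0 -2.0
# A sufficiently large fine on overfishers flips the individual incentive.
print([temptation(k, fine=2.0) for k in range(N)])  # [-0.25, -0.25, -0.25, -0.25]
```

Nothing in the first two lines of output depends on what the communities know or how well they communicate; only the fine, which stands in for monitoring and sanctions, changes the sign of the temptation.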

Consider the following scenarios, and label them on a scale of mostly-competitive, mixed-motive and mostly-cooperative, and note whether or not they are a pure coordination problem, bargaining problem or social dilemma.

- A group of people all fishing from the same lake, each to feed themselves and their family.

- Two friends who want to meet in town but can't communicate. They only care about meeting each other, not where they meet.

- Same scenario as above, but now the friends do have different preferences over where they meet.

- A rugby team.

- Climate negotiations, where nations must agree on how to distribute the costs and benefits of emissions reductions.

- Two countries negotiating how to share the water resources of a river that crosses their border.

Those who are very familiar with the basics of game theory might find the following optional content interesting. It provides a more rigorous exploration of coordination problems, bargaining problems and (sequential) social dilemmas, and breaks bargaining problems down into two types: symmetric and asymmetric. It’s a good example of developing and evaluating solutions for the different types of settings an agent may find themselves in, focussing mainly on the idea of “norm-adaptiveness”.

Why Cooperative AI Focuses on Particular Mixed-motive Settings

Now let's summarise what we’ve read and present a general overview of why the field of cooperative AI tends to focus on what it does (at least as we see it at the Cooperative AI Foundation).

- Highly competitive or zero-sum settings are rare and leave no room for overall cooperation or mutual benefit. Improved outcomes in fully cooperative settings and pure coordination problems are likely to be a default result of general AI progress. Therefore, these settings are typically of little interest to the field.

- Mixed-motive problems are common, and high-stakes bargaining problems and social dilemmas don’t simply disappear as agents become more generally capable.

- If we imagine that advanced and influential AI agents will be somewhat aligned with subsets of humans (e.g. AI assistants for a company or AI delegates for a nation’s government) with different goals, then we should expect AI agent interactions to be mixed-motive too.

- Not only this, but some of humanity's greatest problems, like climate change and international conflict, stem from a strong tension between individual incentives and the collective good, rather than from problems of coordination alone.

- This is why cooperative AI tends to focus on finding general and context-specific methods to solve the most challenging mixed-motive situations.

What do you think about the priorities of the cooperative AI field as they are presented above? Do you agree with all the assumptions and conclusions?

Roads shared by self-driving and human-operated cars are a popular multi-agent system to study. Why do you think the field of cooperative AI does not focus on this kind of multi-agent system?

Read Project Vend: Can Claude run a small shop? (And why does that matter?). Why do you think the LLM agent in this setting behaved the way it did? If a company wanted to deploy LLM agents like this, what methods could they use to improve their performance?

Currently, there is ongoing work to develop autonomous underwater vehicles (AUVs) for military applications. They are used for surveillance of undersea infrastructure, reconnaissance, and detecting hostile activities. In theory, as these vehicles become more sophisticated, interactions between the AUVs of different nations could significantly affect whether cooperation between those nations succeeds or fails. Can you think of other present-day or hypothetical future examples of interactions between other kinds of AI agents that might play a significant role in the success or failure of international cooperation?

This section has mainly focussed on concepts from game theory, and how they can be useful for thinking about cooperative AI. While game theory provides important tools for cooperative AI research, it is by no means the only approach for studying multi-agent safety. The next section will focus on a different perspective that instead draws more on tools from complex systems theory.

If you are interested in learning more about game theory for cooperative AI research specifically, we highly recommend working through the ‘Foundations of Cooperative AI’ course material by Vincent Conitzer.
